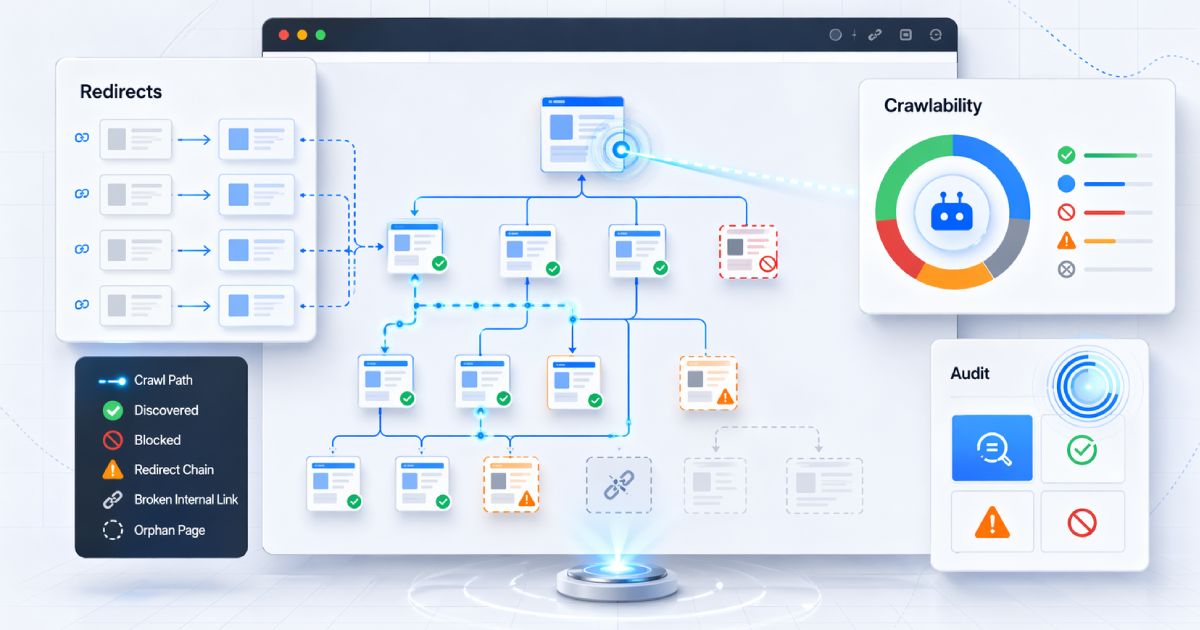

Most teams treat crawl control as a set-and-forget configuration detail. Someone adds a robots.txt file during the initial build, a meta robots tag gets dropped onto a few pages, and then nobody looks at any of it again until something goes wrong.

The problem is that crawl directives and indexing directives are different things, controlled by different mechanisms, processed at different layers of the stack. Confusing them causes pages to leak into search results unexpectedly, stay visible as bare URLs with no snippet, waste crawl budget on paths that do not matter, and create inconsistent search behavior across environments.

This guide breaks down the three primary crawl and indexing control mechanisms — robots.txt, the HTML meta robots tag, and the X-Robots-TagHTTP response header — so you know exactly which one to reach for and when.

TL;DR

- Use robots.txt to control crawling— whether a bot can fetch a URL.

- Use the meta robots HTML tag to control indexing of individual HTML pages.

- Use the X-Robots-Tag response header to control indexing for non-HTML files or to apply directives at the server / CDN level.

If you are already dealing with a robots.txt that may be misconfigured, start with 7 Critical robots.txt Mistakes That Are Silently Killing Your SEO for a focused troubleshooting guide.

The core concept: crawling vs indexing

Before choosing a mechanism, you need to understand the distinction these mechanisms are built on.

- Crawling is whether a search engine bot can fetch and read a URL. It is a transport-level question: can the bot make the request and receive the response?

- Indexing is whether that URL can be included in search results. It is a search-level decision: should this resource appear when someone searches for relevant terms?

These are independent operations. A page can be crawled without being indexed (the bot reads it but chooses not to include it). And critically, a URL can appear in search results withoutever being crawled — if other pages link to it, search engines can infer its existence and display it as a bare listing with no snippet.

The key rule

Every mistake in this space comes back to this confusion. The rest of this article shows which tool controls which layer — and what happens when you use the wrong one.

robots.txt: the crawler gatekeeper

robots.txt is a plain-text file placed at the root of your host (https://yourdomain.com/robots.txt). It follows the Robots Exclusion Protocol and tells compliant crawlers — Googlebot, Bingbot, and hundreds of others — which paths they may or may not fetch.

What robots.txt is good for

- Limiting crawler access to unimportant or high-volume URL patterns (faceted navigation, filters, sort parameters).

- Reducing unnecessary crawler traffic to admin panels, internal utility paths, or staging-like environments.

- Protecting crawl budget on large sites with deep URL structures.

- Pointing crawlers to your sitemap via the

Sitemap:directive.

What robots.txt is not good for

- Preventing indexing. Blocking crawl access does not remove a URL from results if it is linked elsewhere.

- Security. robots.txt is publicly readable. Anyone can view it. Non-compliant bots ignore it.

- Privacy. Disallowing a path actually advertises its existence to anyone reading the file.

Example

User-agent: * Disallow: /admin/ Disallow: /search? Sitemap: https://www.codeava.com/sitemap.xml

Both Google and Bing consume robots.txt directives, but crawler behavior can differ between engines. Always validate in the relevant webmaster tools and inspect real production responses — not just the file in your repo.

Meta robots: page-specific indexing rules in HTML

The meta robots tag lives inside the <head> of an HTML document. Unlike robots.txt, which controls whether a bot can fetch a page, meta robots controls what a bot should do with the page after fetching it.

This is the right mechanism when you want a specific page to remain accessible to users but stay out of search results.

Common use cases

- Thank-you and post-conversion pages.

- Internal site search result pages (thin, duplicative, low value).

- Temporary landing pages or campaign variants.

- Utility pages that should be crawlable but not appear in results.

Example

<meta name="robots" content="noindex, follow">

Common directive values

| Directive | Effect |

|---|---|

| noindex | Do not include this page in search results. |

| nofollow | Do not follow outbound links on this page for discovery or ranking. |

| noarchive | Do not show a cached copy of this page in results. |

| nosnippet | Do not display a text snippet or video preview in results. |

There is one critical dependency: the search engine must be able to crawl the page to see the meta robots tag. If the page is also blocked in robots.txt, the bot will never read the noindex instruction. Bing also supports the meta robots tag with the same standard directive values.

X-Robots-Tag: the response-header option developers forget

The X-Robots-Tagis an HTTP response header that carries the same indexing directives as the meta robots tag, but it is sent in the response headers rather than embedded in HTML. This makes it applicable to any resource type — not just HTML pages.

For developers, this is often the cleanest mechanism when you need to apply indexing rules at scale or to non-HTML content.

Common use cases

- PDFs, images, and downloadable documents that should not appear in search results.

- JSON or API responses that are publicly reachable but not intended for indexing.

- Server-level rules applied via Nginx, Apache, Next.js custom headers, edge middleware, or Cloudflare configurations.

- Large-scale deployment patterns where editing individual HTML files is impractical.

Example

X-Robots-Tag: noindex, noarchive

Bing supports X-Robots-Tag headers as well, so this mechanism works consistently across major search engines. The important caveat: always verify the header is actually present in production responses — not just configured in your server config.

You can use the CodeAva HTTP Headers Checker to inspect live response headers and confirm that X-Robots-Tag directives are being served as expected.

The fatal developer mistake: Disallow + noindex conflict

This is the single most common and most damaging crawl-control mistake in production, and it stems directly from the crawling-vs-indexing confusion.

Here is the scenario:

- A developer blocks a URL path in

robots.txtwith aDisallowrule. - The same developer (or a different team member) adds a

noindexmeta tag or X-Robots-Tag to the page, expecting it to be removed from search results. - Because robots.txt blocks crawling, Googlebot never fetches the page.

- Because Googlebot never fetches the page, it never reads the noindex instruction.

- The URL continues to appear in search results as a bare listing — no title, no snippet, just a URL — if other pages link to it.

noindex in robots.txt is not a supported solution

noindex inside robots.txt is not a supported directive. Even if you add it, Google will not process it. The only reliable way to prevent a page from being indexed is to allow crawling and serve the noindex instruction via the meta tag or X-Robots-Tag header.The developer takeaway: never combine a robots.txt Disallow with an expectation that a page-level noindex directive will still be processed. If you want a page removed from search results, allow crawling and use noindex. If you need to block crawling for other reasons, understand that the URL may still appear as a bare listing.

Decision matrix: which mechanism to use

Use this table as a quick reference when deciding how to handle a specific crawl or indexing scenario.

| Scenario | Recommended method | Why |

|---|---|---|

| Hide a PDF from search | X-Robots-Tag | Works for non-HTML resources via response headers. |

| Keep a thank-you page out of search | meta robots | Page-level HTML control; the bot crawls the page and reads the noindex tag. |

| Reduce crawler load on filtered URLs | robots.txt | Crawl-level control; prevents bots from fetching these paths at all. |

| Apply noindex to a large class of files via server config | X-Robots-Tag | Scalable response-level deployment; no per-file HTML edits needed. |

| Keep an API endpoint from being crawled | robots.txt + authentication if private | robots.txt handles compliant bots; authentication handles everything else. |

| Prevent a utility HTML page from indexing | meta robots | Lets bots crawl and process the noindex rule; page stays accessible to users. |

Practical implementation notes for modern stacks

Knowing which mechanism to use is half the problem. Getting it deployed correctly across your stack is the other half.

Next.js and server-rendered frameworks

In Next.js, you can set custom response headers in next.config.js (or next.config.ts) using the headers function, or via middleware for dynamic rules:

// next.config.js — static header rules

module.exports = {

async headers() {

return [

{

source: '/docs/:path*.pdf',

headers: [

{ key: 'X-Robots-Tag', value: 'noindex, noarchive' },

],

},

];

},

};CMS-level meta robots

Most modern CMS platforms (WordPress, Webflow, Sanity, Contentful) expose a meta robots field per page or template. Use it for per-page control. Do not hard-code the tag in a global template unless you intend it to apply everywhere.

Nginx and Apache

# Nginx — noindex all PDFs

location ~* \.pdf$ {

add_header X-Robots-Tag "noindex, noarchive" always;

}

# Apache — noindex all PDFs

<FilesMatch "\.pdf$">

Header set X-Robots-Tag "noindex, noarchive"

</FilesMatch>Cloudflare and edge-based header injection

Cloudflare Workers, Transform Rules, and Page Rules can all inject response headers. This is useful when you do not control the origin server's configuration directly. The same approach applies to Vercel Edge Middleware, Netlify headers, and other edge platforms.

The most important rule: verify in production

Configuration is not the same as deployment. Headers configured in a reverse proxy may be stripped by a CDN. Meta tags added in a CMS may be overridden by a plugin. robots.txt in your repo may differ from what is served at the domain root.

Always verify directives in the actual HTTP response. Use the HTTP Headers Checker to inspect live headers, and run a Website Audit to catch crawl-control issues across your site.

Conclusion

Crawl control is not one mechanism — it is three, each operating at a different layer:

- robots.txt controls crawling— whether a bot can fetch a URL.

- Meta robots controls indexingof HTML pages — whether a crawled page appears in results.

- X-Robots-Tag controls indexingat the response level — for non-HTML resources and server-wide rules.

Using the wrong one — or combining them incorrectly — creates silent problems that do not surface in error logs or monitoring dashboards. The only reliable approach is to choose the right control at the right layer, deploy it deliberately, and verify it in production.

Do not wait for conflicting directives to create indexing problems. Use the CodeAva Website Audit to spot crawl-control issues and the HTTP Headers Checker to verify X-Robots-Tag behavior in real responses.