The new site is live. The design system looks great. Launch day was uneventful. Two weeks later, organic traffic is down 30 percent, Search Console is flagging redirect errors, and a third of the old URL inventory has been classified as soft 404. The team thought the migration was done. Search engines disagree.

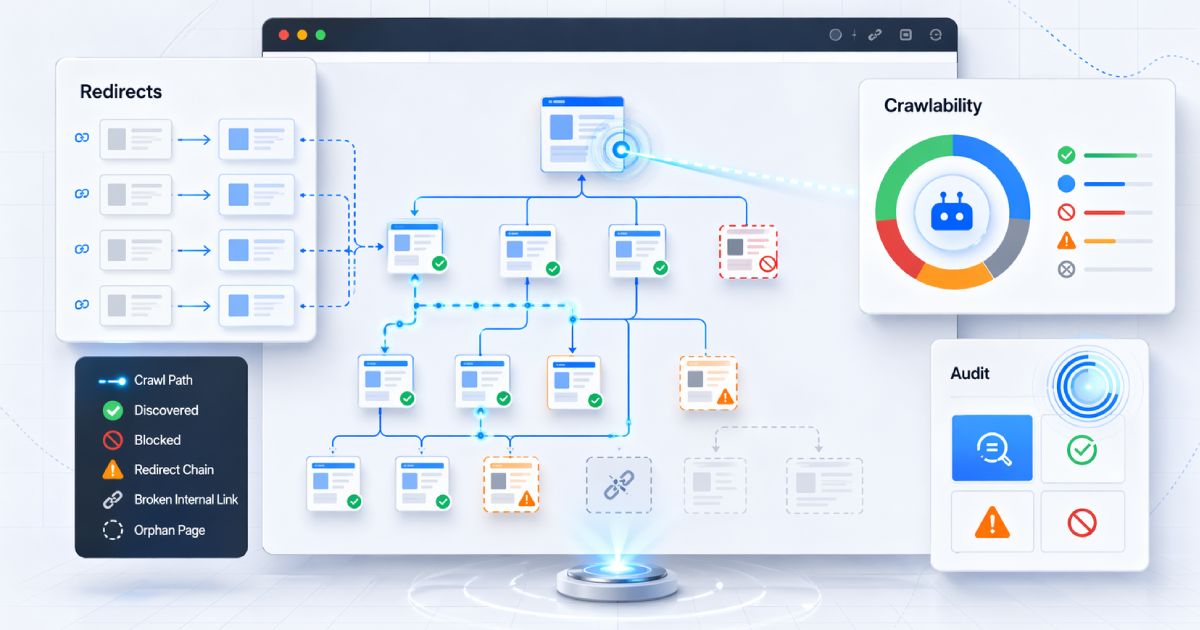

A migration is not finished when the new site is live. It is finished when crawlers can reliably discover, fetch, and consolidate the new URLs — and when the signals the new site sends are consistent with the signals the old site sent. Crawlability after a migration is an engineering problem with a short list of failure modes: redirect integrity, discovery mapping, robots access, canonical alignment, and monitoring.

This guide is a forensic post-migration audit. The order matters: broken redirects and blocked crawl paths make every other diagnosis unreliable, so they come first.

TL;DR

- A post-migration crawlability audit focuses on four pillars: redirect integrity, discovery (sitemaps and internal links), crawl access (robots.txt), and canonical alignment.

- Old URLs should return permanent server-side redirects directly to the best new equivalent, not a chain and not the homepage.

- The new XML sitemap must contain only canonical, indexable, 200-status URLs.

- Robots.txt must notaccidentally block the new structure — especially staging rules or overly broad disallow patterns.

- Canonicals, internal links, redirects, and sitemaps must all agree on the same preferred URLs.

- Monitor Search Console and crawl behavior for weeks after launch; consolidation is not instant.

Audit the live site the way a crawler sees it, not the way the design team sees it. The CodeAva URL Indexability Inspector follows the full redirect chain, reads the final response headers and HTML, extracts meta robots, X-Robots-Tag, rel=canonical, and hreflang, and checks robots.txt — which covers most of the per-URL signals this audit depends on. Pair it with the broader CodeAva Website Audit for a quick technical-hygiene snapshot of the homepage and key templates.

Why post-migration crawlability fails

A site move introduces uncertainty for crawlers. URLs change, structures change, sometimes domains change. Search engines need strong, consistent signals to map old URLs to new destinations and to reassign the authority those old URLs had accumulated.

Most post-migration crawl problems are not “the site is uncrawlable everywhere”. They are specific, recurring failures that cluster around a handful of templates or URL patterns:

- A redirect rule that forgot one URL pattern, so 8 percent of old inventory returns 404.

- A canonical template that still hardcodes the old domain on some pages.

- A staging-mode robots.txt that shipped to production on launch day.

- A sitemap generator that still lists the pre-migration URL set.

- A redirect chain that hops through two intermediate URLs before reaching the new destination.

The fix is not to guess. It is to audit each pillar in order, one template and one URL sample at a time, and remove the mixed signals.

Pillar 1: redirect integrity

Permanent moves need permanent server-side redirects. That means 301 (or 308, which preserves the HTTP method) — not 302, not 307, not client-side JavaScript redirects. Old URLs should resolve to their final new destination in a single hop whenever the architecture allows.

Pass vs. fail patterns

A clean migration redirect map looks like this:

# Pass: direct 1:1 map to the best new equivalent

GET /old/product/blue-widget-v2 → 301 → /products/blue-widget

# Pass: consolidated move when the old page is genuinely merged

GET /old/category/blue-widgets → 301 → /category/widgets?color=blue

# Fail: redirect chain adds latency and auditing noise

GET /old/product/blue-widget-v2 → 301 → /products/blue-widget-v2

→ 301 → /products/blue-widget

# Fail: irrelevant destination creates soft-404 risk

GET /old/product/discontinued → 301 → / (homepage)

GET /old/product/discontinued → 301 → /products (unrelated)

# Fail: temporary redirect for a permanent move

GET /old/product/blue-widget-v2 → 302 → /products/blue-widgetGoogle can follow redirect chains up to a reasonable limit and will usually consolidate signals eventually, but direct redirects resolve faster, load fewer bytes, and are much easier to audit. Every unnecessary hop is a place something can go wrong — a regex mistake, a canonicalisation mismatch, a missed query string.

Validate redirects at the HTTP layer, not in a browser. Browsers hide the chain. A tool that follows and prints the hop list — such as the URL Indexability Inspector or a curl -ILon each representative old URL — gives you the truth about what search engines see.

Pillar 2: discovery mapping with sitemaps and internal links

Once redirects are clean, the next question is: can crawlers find the new URLs efficiently? Discovery happens through two main channels — the XML sitemap and the site’s own internal link graph.

Sitemap hygiene

The new sitemap must contain only URLs that meet all of the following:

- Return HTTP 200 directly (no redirects, no errors).

- Are allowed by robots.txt.

- Are not

noindexvia meta robots or X-Robots-Tag. - Self-reference via canonical, or are the canonical target of another URL.

- Use absolute URLs, not relative paths.

Anything that fails those checks pollutes the sitemap and adds noise to Search Console’s coverage reports. A common post-migration mistake is carrying over the old sitemap generator verbatim, so the file still contains URLs that now redirect or 404.

For deeper sitemap guidance, see XML Sitemap Best Practices. For a quick live validation of the production file, the CodeAva Sitemap Checker parses the XML, flags duplicates, relative URLs, and malformed entries, and shows a per-URL list you can scan for post-migration noise.

Internal links

Internal links are the most-trusted discovery signal a site controls directly. After a migration, every internal link that still points to an old URL forces crawlers through an extra redirect hop and sends a weak signal about which URL is preferred.

Audit internal links for:

- Navigation menus (header, footer, mega menus)

- Breadcrumbs and related-content rails

- Pagination and facet links

- In-body links inside CMS content

- Hero CTAs and marketing module links

- Structured data references (item URLs, breadcrumb lists)

Update the templates and the CMS content. Template links update instantly. Hardcoded in-body links in thousands of legacy articles usually do not, and they are often the last holdouts in a migration cleanup.

Transitional tactics

During a migration, some teams temporarily keep the old URL inventory accessible in monitoring tools or a separate file to validate that every old URL has a working redirect. That is a legitimate operational tactic, not a universal requirement. The production sitemap shipped at the new URL should stay clean and canonical-first. Do not confuse internal migration monitoring with public discovery signals.

Pillar 3: robots.txt and crawl access

The single highest-impact post-launch incident is a production robots.txt that blocks the new site. It happens more often than teams admit: staging rules ship with the deploy, a new CMS inserts its default disallow list, an overly broad pattern matches more than intended, or the file is served from the wrong path after an infrastructure change.

Post-migration, verify at minimum:

https://new-domain.com/robots.txtreturns 200 withtext/plaincontent-type.- The homepage is allowed (

Allow: /or the absence of a blocking rule). - Each critical template is allowed: product pages, category pages, article pages, landing pages, pagination.

- New path patterns introduced by the migration (for example,

/shop/,/blog/, localized folders) are not accidentally matched by a generic disallow. - The file contains the correct

Sitemap:directive pointing at the new sitemap URL. - Staging-specific rules such as

Disallow: /orDisallow: /adminare not leaking into production.

Robots.txt is not the only crawl-control layer. Meta robots, X-Robots-Tag HTTP headers, server authentication, and CDN rules can all block crawlers too. The Robots.txt vs. Meta Robots vs. X-Robots-Tag comparison explains which signal to use where. For the most common robots.txt mistakes that ship in migrations, see Robots.txt Mistakes Blocking SEO. To validate the live file, run it through the CodeAva Robots.txt Checker, which parses the rules, tests URL access per user-agent, and extracts the declared sitemap.

Pillar 4: canonical alignment

Canonical tags exist to resolve duplicate-URL ambiguity. On the new site, they must point to the new preferred URLs — not to the old domain, not to the old URL format, not to a stale template default from the previous stack.

The migration-critical checks:

- Self-referencing canonicals on the new preferred URLs by default.

- No old-domain canonicals. After a domain move, canonicals pointing to the old host directly contradict the redirects.

- Absolute URLs in canonical tags, with the correct protocol and host.

- Consistency across the stack. The canonical, the internal link, the sitemap entry, and the redirect destination must all agree on the same URL.

- No canonical to a redirected URL. A canonical pointing at a URL that 301-redirects creates a loop of conflicting signals.

Server-rendered canonicals are easier to audit than client-injected ones. If your stack renders canonical tags after hydration, verify that the value delivered in the initial HTML matches the final DOM. For JavaScript and headless stacks specifically, see Canonical Tags for JavaScript & Headless Websites.

Soft 404 clusters: the migration mistake that looks “safe”

The most damaging migration shortcut is catching every orphan redirect with a fallback to the homepage or a generic category page. Operationally it looks safe: no URL ever returns a 404, the redirect map is trivially complete, and the rollback story is easy.

Technically, it is not safe. If the redirect destination is not a meaningful replacement for the original URL, Google will classify it as a soft 404. The old URL does not consolidate onto the new destination, the new destination picks up noisy signals, and the migration recovers more slowly.

A practical framework:

- Old product → equivalent new product: good. Direct 1:1 replacement of intent.

- Old product → parent category with that product consolidated into it: acceptable, provided the category really is the intended replacement (same brand, family, intent).

- Old product → unrelated category: poor. The destination does not satisfy the original intent.

- Old product → homepage: almost always soft-404 territory. Use only when the URL had essentially no useful content or traffic to preserve.

- Old URL with no replacement: return a real

404or410. An honest “this is gone” is better for crawl efficiency and signal clarity than a fake redirect.

The most dangerous migration shortcut

Response quality and crawl efficiency

Correctness is the first gate; response quality is the second. A redirect that works but takes two seconds to resolve is still a problem during a migration. Crawlers budget time per host, and site moves often increase crawl demand while the new architecture is being mapped — so poor response behavior becomes more visible, not less, during transition periods.

Check for:

- Redirect latency. 301s should be near-instant at the edge. Slow redirects usually mean the app is booting or a database lookup is happening inside the redirect path.

- Redirect chains. Three hops take three network round trips. Collapse them where possible.

- App-boot delays. Cold serverless functions, slow CMS rendering, or full-page server components with uncached dependencies can make the final 200 response slow even when the redirect chain is clean.

- Unstable infrastructure. 5xx spikes during the migration window are far more visible to crawlers because they are visiting more URLs more often.

For small sites, “crawl budget” is rarely the limiting factor — most pages get crawled often enough. For large sites or large migrations, crawl efficiency is a first-class concern. Tight, fast, predictable responses shorten the consolidation window regardless of site size.

Search Console checks after migration

Verify every relevant property. If you changed domain or protocol, you likely need a new Search Console property for the new host, and you should keep the old property verified for at least the monitoring window. If a full domain move applies and the Change of Address workflow is still relevant in your configuration, use it.

What to inspect in the weeks after launch:

- Page Indexing reporton the new property. Watch for spikes in “Not found (404)”, “Soft 404”, “Redirect error”, “Server error (5xx)”, and “Duplicate without user-selected canonical”.

- Sitemap submission status. Submit the new sitemap. Confirm it is read successfully. Track submitted vs indexed counts over time.

- URL Inspection on representative templates: homepage, a product, a category, an article, a paginated page. Confirm Google sees the correct canonical, meta robots, and rendered content.

- Crawl Stats(Settings → Crawl Stats). Watch response-time and response-code distributions. Sudden shifts toward slow or error responses indicate an infrastructure regression.

- Performance report. Track impressions and clicks per URL group. A well-executed migration will show the old URLs trailing off and the new URLs picking up equivalent impressions within weeks.

For the “discovered but not indexed” and “crawled but not indexed” statuses that often spike during migrations, see the diagnosis workflows in Discovered – Currently Not Indexed fixes and Crawled – Currently Not Indexed fixes. Both are common post-migration signals, and their causes overlap heavily with the pillars in this guide.

The post-migration crawlability checklist

Work through these in order. Each step assumes the previous one is clean; fixing canonicals before fixing redirects is almost always wasted effort.

- Confirm every old URL redirects permanently to the best new equivalent. Sample at least the top 1–5 percent of old URLs by traffic, and at least one URL from every template. Use a tool that follows the full HTTP chain.

- Remove redirect chains wherever possible. Rewrite rules so old URLs land on the final destination in a single hop.

- Spot-check old high-value URLs manually to confirm the new destination is a meaningful replacement, not a soft-404-prone fallback.

- Confirm the new sitemap contains only canonical 200 URLs, served with absolute URLs and a valid

lastmod. - Confirm robots.txt allows crawling of the new structure, with no leftover staging rules and a correct

Sitemap:directive. - Confirm canonicals self-reference the preferred new URLs and never point to the old domain or a redirected URL.

- Confirm internal links— navigation, breadcrumbs, pagination, in-body content — point directly to the new preferred URLs.

- Replace homepage redirects with real mappings or real 404/410 responses wherever the current destination is not a meaningful replacement.

- Inspect Search Console indexing, sitemap, URL Inspection, and Crawl Stats reports. Investigate every spike.

- Re-crawl key templates and legacy URL samples after fixes to confirm the live output matches the intent. Repeat until the sampled URLs are clean.

Validate in live HTTP, not just in staging

Common migration mistakes teams make

- Using 302s for a permanent migration. Signals the move is temporary and delays consolidation.

- Keeping redirect chains in placebecause “Google can follow them anyway.” It can, but every hop adds latency and auditing noise.

- Redirecting large numbers of old URLs to the homepage. Soft-404 risk at scale; weak consolidation of old ranking signals.

- Launching with staging robots.txt rules. The single highest-impact post-launch incident.

- Shipping stale canonicals that still point to the old domain or the old URL format.

- Submitting dirty sitemaps full of redirected, 404, or non-canonical URLs.

- Updating templates but forgetting internal links in CMS content, structured data, and marketing modules.

- Ignoring Search Console after launch. Problems that are obvious at week one often take weeks or months to resolve once they compound.

- Treating migration as “done” at launch. The migration is only done when new URLs are consolidated and indexed at a level consistent with the old ones.

Post-migration audit cheat sheet

One row per audit area. Use it as a reviewer’s checklist during a launch readiness pass or a post-launch incident triage.

| Audit area | What good looks like | Common failure | Impact on crawlability |

|---|---|---|---|

| Redirects | Single-hop permanent server-side 301/308 to the best new equivalent. | 302s, JS redirects, long chains, irrelevant destinations. | Slower consolidation of signals; higher soft-404 risk. |

| Sitemap | Only canonical, indexable 200 URLs. Absolute paths. Valid lastmod. | Old URLs, redirected URLs, noindex URLs, relative paths. | Noisy discovery; misleading coverage reports. |

| Robots.txt | Allows all public templates; declares the new sitemap; no staging rules. | Disallow: /, overly broad patterns, missing sitemap directive, wrong file path. | Can block entire sections of the new site from being crawled. |

| Canonical tags | Self-referencing, absolute, new-domain, consistent with redirects and sitemap. | Old-domain canonicals, canonicals to redirected URLs, client-injected mismatches. | Conflicting signals delay index consolidation. |

| Internal links | All navigation, breadcrumbs, and in-body links point at the new preferred URLs directly. | Links still hit old URLs and redirect; CMS content not updated. | Extra hops; weaker discovery of the new URLs. |

| Old URL handling | Direct redirects to meaningful equivalents; honest 404/410 for retired URLs with no replacement. | Blanket homepage redirects; “catch-all” category fallbacks. | Soft-404 clusters; weak signal transfer. |

| Search Console monitoring | Old and new properties verified; indexing, sitemaps, crawl stats reviewed weekly. | No verification on new host; no one watches indexing anomalies post-launch. | Regressions compound invisibly; recovery takes months instead of weeks. |

Automate the forensic checks

A good post-migration audit blends manual review with targeted automated checks on the live production response. The CodeAva tooling most relevant to this workflow:

- URL Indexability Inspector — follows the live redirect chain, parses final response headers, extracts meta robots, X-Robots-Tag, canonical, and hreflang, and checks robots.txt crawlability hints for the host. Use it per URL for deep diagnostics on representative old and new URLs.

- Robots.txt Checker — validates the live robots.txt, tests URL access per user-agent, and extracts the declared sitemap URL. First thing to run on launch day.

- Sitemap Checker — parses the new sitemap XML, flags duplicates, relative URLs, malformed entries, and surfaces fixes before Search Console sees the noise.

- Website Audit — quick technical-hygiene snapshot of a URL: HTTP status, metadata, canonical, H1, Open Graph, Twitter Card, security headers, robots.txt and sitemap.xml reachability. Useful for the homepage and a handful of key templates as part of a launch-readiness pass.

These tools do not replace a site-wide crawler or Search Console. They complement them by giving fast, reliable per-URL and per-file diagnostics on the live public response, which is what search engines actually consume.

A migration is done when crawlers agree it is done

Shipping the new site is not the end of the migration; it is the start of the consolidation window. Work is finished only when search engines can reliably discover, fetch, and consolidate the new URLs — and that requires every signal the site sends to agree on the same set of preferred destinations.

The core audit areas do not change: redirect integrity, discovery via sitemaps and internal links, robots-level crawl access, and canonical alignment, with disciplined Search Console monitoring for at least several weeks after launch. Teams that verify the live output quickly and fix inconsistencies before they compound recover in weeks. Teams that do not usually recover in quarters.

When you are ready to audit a specific page, run it through the CodeAva URL Indexability Inspector for a full per-URL signal report, validate the production robots.txt and sitemap, and use the CodeAva Website Audit for a broader hygiene snapshot of the homepage and core templates. For deeper reading on the supporting pillars, see Robots.txt Mistakes Blocking SEO, XML Sitemap Best Practices, and Canonical Tags for JavaScript & Headless Websites.