Most engineering teams know they should audit their code. Far fewer actually do it consistently — and the ones that skip it tend to discover why it matters at the worst possible moment: a production outage, a security breach, or an on-call engineer staring at a codebase they no longer recognise.

This is not a post about discipline. It is about economics. Unreviewed code accumulates technical debt the way interest accumulates on a credit card: slowly at first, then all at once. A code audit is the mechanism that makes the debt visible before it becomes unserviceable.

What a code audit actually examines

A code audit is not a line-by-line read-through. Done well, it is a structured analysis across four domains:

- Security vulnerabilities — hardcoded credentials, eval() usage, unvalidated inputs, SQL injection vectors, and insecure dependencies.

- Code quality — cyclomatic complexity, deeply nested logic, missing error handling, and functions that do too much.

- Maintainability — dead code, unused exports, inconsistent patterns, and inadequate test coverage.

- Dependency health — outdated packages, known CVEs, and dependencies that are heavier than necessary for their use case.

Most teams focus on the first domain, security, when they think about audits. The other three are where the slow-burn costs hide.
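To make the security domain concrete, here is a minimal sketch of a pattern-based check for two of the items listed above: eval() usage and hardcoded credentials. The rule ids, the regular expressions, and the `scanSource` helper are all illustrative assumptions, not the behaviour of any particular audit tool. Real scanners parse the code rather than matching text, so treat this as a way to see the idea, not as a production check.

```javascript
// Hypothetical rule set: each rule pairs an id with a regular expression.
// Text matching like this will produce false positives and false negatives.
const RULES = [
  { id: "eval-usage", pattern: /\beval\s*\(/ },
  {
    id: "hardcoded-credential",
    // A credential-like name assigned a non-empty string literal.
    pattern: /\b(password|secret|api[_-]?key|token)\b\s*[:=]\s*["'][^"']+["']/i,
  },
];

// Scan source text line by line and return one finding per rule match.
function scanSource(source, file = "<input>") {
  const findings = [];
  source.split("\n").forEach((text, index) => {
    for (const rule of RULES) {
      if (rule.pattern.test(text)) {
        findings.push({ rule: rule.id, file, line: index + 1 });
      }
    }
  });
  return findings;
}

const sample = [
  'const apiKey = "sk-test-1234";', // flagged: hardcoded-credential
  "const total = eval(userInput);", // flagged: eval-usage
  "const safe = Number(userInput);", // not flagged
].join("\n");

console.log(scanSource(sample, "sample.js"));
```

Recording the file and line for each match matters more than the matching itself: it gives later steps, such as classification and prioritisation, something structured to work with.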

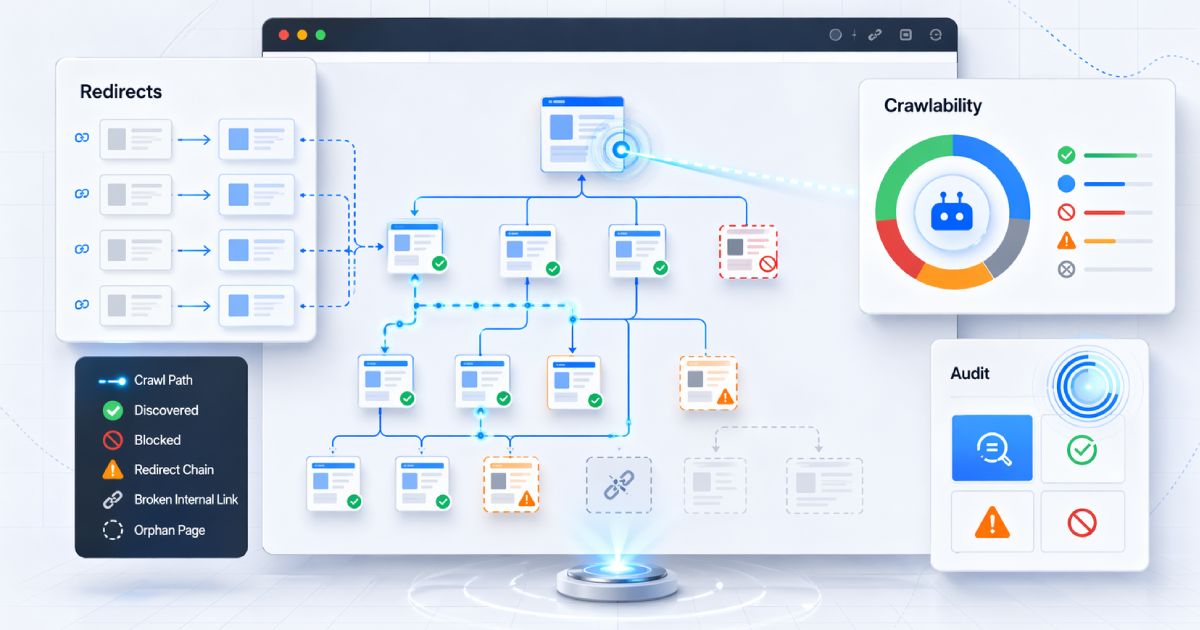

The four categories of findings

Good audit tooling classifies findings rather than dumping a flat list of problems. A sensible severity model looks like this:

- Critical — must be fixed before the next deploy. Security risks, potential data loss, confirmed vulnerability patterns.

- Warning — should be addressed in the next sprint. Reliability risks, significant complexity, deprecated patterns.

- Info — worth tracking over time. Style inconsistencies, minor inefficiencies, improvement opportunities.

- Passed — explicitly confirmed safe. Showing what passed is as important as showing what failed; it builds confidence and documents your baseline.
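As a rough sketch, the severity model above can be expressed as a small grouping step that turns a flat list into per-level buckets. The finding shape (`id`, `severity`) and the function names are assumptions made for illustration.

```javascript
// Severity names follow the model above; the order is most to least urgent.
const SEVERITIES = ["critical", "warning", "info", "passed"];

// Group findings by severity, rejecting anything outside the model.
function groupBySeverity(findings) {
  const groups = new Map(SEVERITIES.map((severity) => [severity, []]));
  for (const finding of findings) {
    const bucket = groups.get(finding.severity);
    if (!bucket) {
      throw new Error(`Unknown severity: ${finding.severity}`);
    }
    bucket.push(finding);
  }
  return groups;
}

// One-line summary that includes "passed", so the baseline stays visible.
function summarise(findings) {
  const groups = groupBySeverity(findings);
  return SEVERITIES.map((s) => `${s}: ${groups.get(s).length}`).join(", ");
}

const findings = [
  { id: "A1", severity: "critical" },
  { id: "A2", severity: "warning" },
  { id: "A3", severity: "warning" },
  { id: "A4", severity: "passed" },
];

console.log(summarise(findings));
// critical: 1, warning: 2, info: 0, passed: 1
```

Levels with no findings still appear in the summary with a count of zero, which keeps reports comparable from one audit to the next.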

Start with automated triage

When to audit

There is no single right cadence, but there are clear trigger events where the return on investment is highest:

- Before a major release or launch — the highest-stakes moment to have a clean bill of health.

- Before a significant refactor — you need to know what you are working with before you change it.

- After team growth — new engineers bring new patterns. What is consistent with three engineers becomes fragmented with twelve.

- After a production incident — a post-mortem is incomplete without checking whether the class of issue that caused the incident exists elsewhere in the codebase.

- Quarterly as a baseline — even if nothing dramatic has happened, drift accumulates. A regular pulse check keeps technical debt from compounding silently.
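If you want the cadence to be checked rather than remembered, the triggers above reduce to a small function. This is a sketch under stated assumptions: the event names are hypothetical labels for the list above, and "quarterly" is approximated as 90 days.

```javascript
const MS_PER_DAY = 24 * 60 * 60 * 1000;
const BASELINE_INTERVAL_DAYS = 90; // assumption: a quarter, approximated

// Hypothetical event names mirroring the trigger list above.
const TRIGGERS = new Set([
  "major-release",
  "significant-refactor",
  "team-growth",
  "production-incident",
]);

// Return the reasons an audit is due; an empty array means none apply.
function auditReasons({ lastAudit, now = new Date(), events = [] }) {
  const reasons = events.filter((event) => TRIGGERS.has(event));
  const daysSince = Math.floor((now - lastAudit) / MS_PER_DAY);
  if (daysSince >= BASELINE_INTERVAL_DAYS) {
    reasons.push(`baseline (${daysSince} days since last audit)`);
  }
  return reasons;
}

console.log(
  auditReasons({
    lastAudit: new Date("2024-01-01T00:00:00Z"),
    now: new Date("2024-04-15T00:00:00Z"),
    events: ["production-incident"],
  })
);
// [ 'production-incident', 'baseline (105 days since last audit)' ]
```

Returning reasons instead of a boolean makes the result usable as the opening line of the audit ticket.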

Automation vs manual review

These are not alternatives — they are layers in a defence-in-depth approach to code quality.

Automated static analysis is fast, consistent, and tireless. It catches the same class of problems every time without reviewer fatigue. It should run on every pull request and every commit to main.

Manual review catches what automation cannot: architectural drift, incorrect business logic, surprising side effects, and the kind of clever-but-wrong code that passes all the linters but fails the first time a user does something unexpected.
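One way to wire the automated layer into pull requests is a gate that fails the check when critical findings are present. The sketch below assumes findings carry a `severity` field and that only critical findings block a merge; both are illustrative choices, and `evaluateGate` and `exitCodeFor` are hypothetical names.

```javascript
// Decide whether the automated check should pass.
// Assumption: only critical findings block; warnings are reported.
function evaluateGate(findings) {
  const count = (severity) =>
    findings.filter((finding) => finding.severity === severity).length;
  const critical = count("critical");
  const warnings = count("warning");
  return {
    pass: critical === 0,
    summary: `${critical} critical, ${warnings} warning`,
  };
}

// Convert the gate result to a process exit code for a CI step.
function exitCodeFor(result) {
  return result.pass ? 0 : 1;
}

const result = evaluateGate([
  { rule: "eval-usage", severity: "critical" },
  { rule: "high-complexity", severity: "warning" },
]);

console.log(result.summary, "-> exit code", exitCodeFor(result));
// In a CI script you would typically set: process.exitCode = exitCodeFor(result);
```

A passing gate only says the automated layer found nothing critical. It says nothing about architecture or business logic, which is why the manual layer still applies to every change that passes.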

Do not confuse passing automation with quality

Quick wins that pay off immediately

Not every audit finding requires a multi-sprint remediation effort. The highest-ROI fixes are often trivial to ship:

- Remove debug statements (console.log, debugger) before they leak sensitive data in production logs.

- Rotate any hardcoded credentials immediately and move them to environment variables.

- Delete dead code — unused functions, unused exports, commented-out blocks — that adds cognitive load without providing value.

- Add a linting rule (no-eval, no-console) to prevent the same class of issue from recurring.

- Update the five most outdated dependencies, focusing on those with known CVEs first.
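For the linting item, a minimal ESLint configuration might look like the sketch below. It assumes ESLint's flat config format in a CommonJS project. The severities and the file glob are illustrative choices, and no-debugger is added here to cover the debugger statements mentioned in the first item.

```javascript
// eslint.config.js (flat config, CommonJS form)
const config = [
  {
    files: ["**/*.js"],
    rules: {
      "no-eval": "error", // block eval() outright
      "no-console": "warn", // surface stray console.log calls
      "no-debugger": "error", // block leftover debugger statements
    },
  },
];

module.exports = config;
```

Setting no-console to "warn" rather than "error" is a judgment call: it lets a team clean up existing calls gradually before tightening the rule.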

How to prioritise findings

When an audit surfaces 40 findings across four severity levels, the temptation is to either fix everything at once or fix nothing. Neither works. A practical prioritisation model:

- Fix all critical security findings in the same sprint they are discovered, regardless of other priorities.

- Schedule warnings that affect reliability for the next planned sprint.

- Fold maintainability improvements into related work — if you are touching a function anyway, clean it up while you are there.

- Use info-level findings as a backlog for slow days and onboarding tasks.
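The model above can be sketched as a routing function that assigns each finding to a bucket. The `category` field and the bucket names are assumptions made for illustration. The model does not say where critical findings outside security belong, so this sketch treats every critical finding as current-sprint work, in line with the "fix before the next deploy" definition of critical.

```javascript
// Route one finding to a remediation bucket. Order matters: severity
// "critical" wins over category, and category wins over "warning".
function bucketFor(finding) {
  if (finding.severity === "passed") return "no-action";
  if (finding.severity === "critical") return "current-sprint";
  if (finding.category === "maintainability") return "with-related-work";
  if (finding.severity === "warning") return "next-sprint";
  return "backlog"; // info-level findings
}

// Build a plan that lists finding ids under each bucket.
function planRemediation(findings) {
  const plan = {
    "current-sprint": [],
    "next-sprint": [],
    "with-related-work": [],
    backlog: [],
    "no-action": [],
  };
  for (const finding of findings) {
    plan[bucketFor(finding)].push(finding.id);
  }
  return plan;
}

const auditFindings = [
  { id: "F1", severity: "critical", category: "security" },
  { id: "F2", severity: "warning", category: "reliability" },
  { id: "F3", severity: "warning", category: "maintainability" },
  { id: "F4", severity: "info", category: "quality" },
  { id: "F5", severity: "passed", category: "security" },
];

console.log(planRemediation(auditFindings));
```

With 40 findings, the useful output is the size of the current-sprint bucket: that number is the work that cannot wait.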

The goal is not a zero-finding codebase — that is an unrealistic target for any living system. The goal is a codebase where critical issues are addressed promptly, the trend is improving, and the team has clear visibility into what they are working with.