Automated accessibility testing is one of the most underinvested areas of modern web development. Most teams either do not test at all, run a Lighthouse audit once at launch, or assume that because their design system is “accessible” the finished product will be too. None of these approaches hold up.

The core constraint to understand is this: automated tools can reliably detect only an estimated 30–40% of WCAG issues. That is a useful 30–40%, because it catches the obvious, consistent, high-volume problems. But it means the majority of accessibility problems in a real application require human judgement to find.

The goal of a good accessibility testing strategy is not to automate everything. It is to automate the right things, consistently, so your engineers can focus their manual effort on the issues that matter most.

What automated testing catches well

Automated tools excel at finding violations that are structurally deterministic: issues that can be confirmed by examining the DOM without needing to understand user intent.

- Missing alternative text on images (`<img>` without `alt`, or with an empty `alt` when the image is the only content of a link or button).

- Form inputs without labels: no associated `<label>`, no `aria-label`, no `aria-labelledby`.

- Colour contrast failures where the ratio is clearly below 4.5:1 for normal text or 3:1 for large text.

- Missing document structure: absent `lang` attribute, skipped heading levels, no landmark regions.

- Invalid ARIA usage: roles without required child elements, orphaned `aria-labelledby` references.

- Interactive elements that are not keyboard focusable: click handlers on `<div>` elements without `tabindex` or an appropriate role.
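Contrast belongs on this list because it is pure arithmetic: WCAG 2.x defines a relative luminance for each colour and a contrast ratio of (L1 + 0.05) / (L2 + 0.05), where L1 is the lighter colour. The sketch below shows that calculation, assuming opaque colours written as `#rrggbb` hex strings. Real checkers must first resolve the computed foreground and background colours, which is exactly where overlaid text and gradients defeat them. The names `contrastRatio` and `passesAA` are illustrative, not a library API.

```typescript
// Sketch of the WCAG 2.x contrast-ratio calculation. Assumes opaque colours
// written as "#rrggbb"; real checkers must first resolve computed colours.

/** Convert one 8-bit sRGB channel (0-255) to linear light, per WCAG 2.x. */
function linearize(channel: number): number {
  const c = channel / 255;
  return c <= 0.03928 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
}

/** Relative luminance of a "#rrggbb" colour: 0 for black, 1 for white. */
function relativeLuminance(hex: string): number {
  const match = /^#([0-9a-f]{2})([0-9a-f]{2})([0-9a-f]{2})$/i.exec(hex);
  if (!match) {
    throw new Error(`Expected a #rrggbb colour, got "${hex}"`);
  }
  const [r, g, b] = match.slice(1, 4).map((pair) => linearize(parseInt(pair, 16)));
  return 0.2126 * r + 0.7152 * g + 0.0722 * b;
}

/** Contrast ratio (lighter + 0.05) / (darker + 0.05), from 1 up to 21. */
function contrastRatio(foreground: string, background: string): number {
  const a = relativeLuminance(foreground);
  const b = relativeLuminance(background);
  const lighter = Math.max(a, b);
  const darker = Math.min(a, b);
  return (lighter + 0.05) / (darker + 0.05);
}

/** WCAG AA: at least 4.5:1 for normal text, 3:1 for large text. */
function passesAA(foreground: string, background: string, largeText = false): boolean {
  return contrastRatio(foreground, background) >= (largeText ? 3 : 4.5);
}
```

For example, `#767676` text on white comes out at about 4.54:1 and passes, while `#777777` is about 4.48:1 and fails: a difference that is hard to judge by eye but trivial for a tool.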

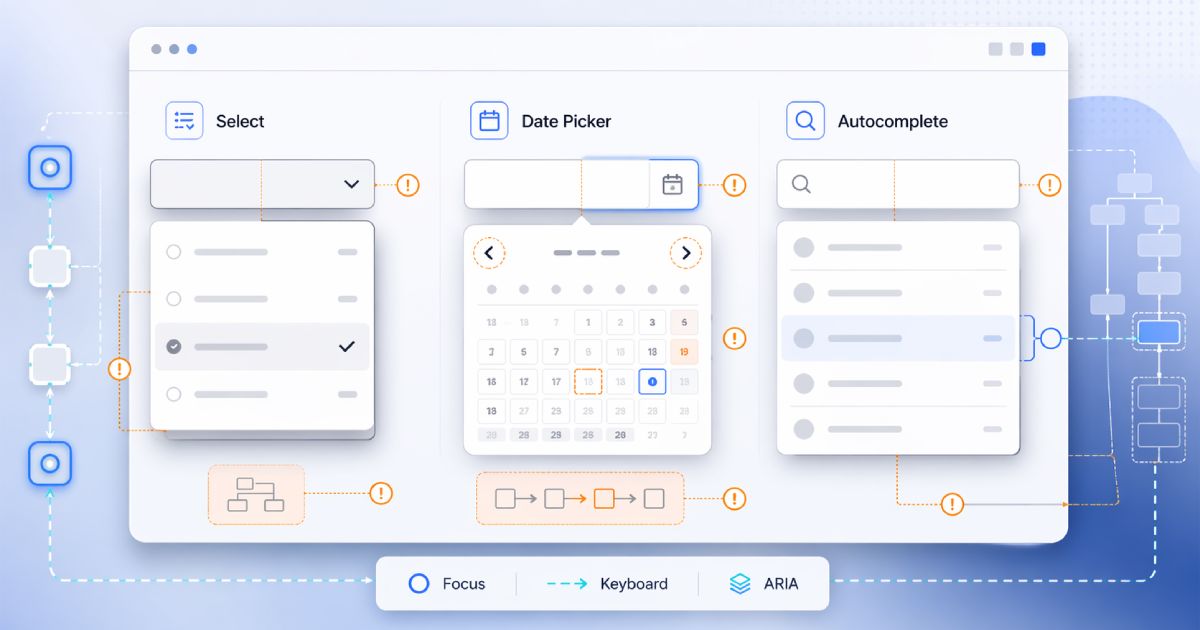

What automated testing misses

This is the category that surprises most teams. Automated tools cannot reliably assess:

- Whether alt text is meaningful. An image can have `alt="image"` and pass automated checks while being completely useless to a screen reader user.

- Whether the logical reading order (DOM order vs visual order) makes sense when navigated linearly.

- Whether dynamic content updates (modals, toasts, live regions) are announced correctly by screen readers in practice.

- Whether a keyboard-only user can complete a real user journey without getting trapped or confused.

- Cognitive accessibility: clarity of language, consistency of navigation, and whether error messages actually help users recover.
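To make the first limit concrete, here is a hypothetical heuristic (it is not an axe-core rule) that a team might add to flag obviously placeholder alt text. The word list is an assumption for illustration. It can catch a lone generic word or a file name, but a fluent, wrong description passes untouched, which is why judging alt text stays a manual check.

```typescript
// Hypothetical heuristic, not part of axe-core or any standard tool.
// It only recognises obvious placeholders; it cannot judge meaning.

const PLACEHOLDER_WORDS = new Set([
  "image", "img", "photo", "picture", "graphic", "icon", "logo", "banner", "spacer",
]);

function looksLikePlaceholderAlt(alt: string): boolean {
  const text = alt.trim().toLowerCase();
  // An empty alt is the valid way to mark an image as decorative.
  if (text === "") return false;
  // A single generic word tells a screen reader user nothing.
  if (PLACEHOLDER_WORDS.has(text)) return true;
  // A file name left over from the CMS or the camera.
  if (/\.(png|jpe?g|gif|svg|webp)$/.test(text)) return true;
  return false;
}
```

Note what slips through: `alt="A red bicycle"` on a photo of a dog is flagged by nothing, automated or otherwise.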

A passing automated scan is not a compliance certificate

The recommended tool stack

For most teams, a three-layer approach covers the majority of automatable issues:

- axe-core via `@axe-core/playwright`: run full-page accessibility analysis as part of your E2E test suite. This catches the widest class of DOM-level violations after JavaScript has fully rendered.

- jest-axe or vitest-axe: run component-level checks in unit tests. Useful for catching violations in isolated components before they reach integration.

- Lighthouse CI: use it as a CI gate to catch regressions on your most important pages. Set a minimum accessibility score (95+ is achievable for well-maintained apps) and fail the build if it drops.
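Lighthouse CI's own `assert` configuration is the usual way to enforce the score, for example `'categories:accessibility': ['error', { minScore: 0.95 }]`. The sketch below is a hand-rolled equivalent, shown only to make the gate's logic explicit. It assumes you have already parsed a Lighthouse report (LHR) from its JSON output, in which `categories.accessibility.score` is a number from 0 to 1, or `null` if the category could not be scored. `checkAccessibilityScore` and `GateResult` are hypothetical names.

```typescript
// Hand-rolled sketch of a Lighthouse accessibility gate. Only the LHR
// fields read here are typed; a real report contains many more.

interface LighthouseReport {
  categories: {
    accessibility?: { score: number | null };
  };
}

interface GateResult {
  passed: boolean;
  score: number | null; // 0-100, or null when no score was produced
}

function checkAccessibilityScore(report: LighthouseReport, minScore = 95): GateResult {
  const raw = report.categories.accessibility?.score;
  if (raw === null || raw === undefined) {
    // A missing score fails the gate, so a broken run cannot pass silently.
    return { passed: false, score: null };
  }
  // The report stores 0-1; the Lighthouse UI (and the 95+ target) use 0-100.
  const score = Math.round(raw * 100);
  return { passed: score >= minScore, score };
}
```

The scale mismatch is the main trap here: a threshold of 95 in the UI corresponds to `minScore: 0.95` in configuration.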

Add axe to Playwright in under 10 minutes

Install `@axe-core/playwright`, then add this to a test after it has navigated to a page:

```ts
const results = await new AxeBuilder({ page }).analyze();
expect(results.violations).toEqual([]);
```

Run it against your three most-trafficked routes to start. Expand coverage as your team builds confidence.

Setting up Playwright accessibility tests in a Next.js project

Assuming you have Playwright already configured, add the axe integration:

- Install the package: `npm install --save-dev @axe-core/playwright`

- Import it in your test file alongside Playwright's test runner: `import { test, expect } from '@playwright/test';` and `import AxeBuilder from '@axe-core/playwright';` (the package's default export).

- After navigating to a page, run: `const results = await new AxeBuilder({ page }).analyze();`

- Assert: `expect(results.violations).toEqual([]);`

Start with your homepage, sign-in flow, and your primary product page. These three cover the most user-facing surface area and will surface the most impactful violations quickly.
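When `expect(results.violations).toEqual([])` fails, Playwright prints a diff of the full axe result objects, which can be long and hard to scan. The sketch below condenses each violation into a header line, its help URL, and a few selectors. The `AxeViolation` and `AxeNode` interfaces are hand-written subsets of axe-core's result fields (`id`, `impact`, `help`, `helpUrl`, `nodes[].target`), and `formatViolations` is a hypothetical helper, not part of either package.

```typescript
// Hypothetical helper: summarise axe violations for a readable failure message.
// The interfaces mirror only the axe-core result fields this helper reads.

type Impact = "minor" | "moderate" | "serious" | "critical";

interface AxeNode {
  target: unknown[]; // selector path for the offending element
}

interface AxeViolation {
  id: string; // rule id, e.g. "color-contrast"
  impact?: Impact | null;
  help: string; // short description of the rule
  helpUrl: string; // link to the rule's documentation
  nodes: AxeNode[];
}

function formatViolations(violations: AxeViolation[], maxNodesPerRule = 3): string {
  if (violations.length === 0) return "No accessibility violations found.";
  return violations
    .map((v) => {
      const header = `${v.id} [${v.impact ?? "unknown"}] ${v.help} (${v.nodes.length} element(s))`;
      // List the first few offending elements, then summarise the rest.
      const shown = v.nodes.slice(0, maxNodesPerRule).map((n) => `    - ${n.target.join(" ")}`);
      const hidden = v.nodes.length - maxNodesPerRule;
      const more = hidden > 0 ? [`    ...and ${hidden} more`] : [];
      return [header, `  ${v.helpUrl}`, ...shown, ...more].join("\n");
    })
    .join("\n\n");
}
```

In a Playwright test you could pass the summary as the assertion's custom message: `expect(results.violations, formatViolations(results.violations)).toEqual([]);`. axe-core's own result type should be structurally compatible with these interfaces, but confirm that against the version you install.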

Manual testing checklist to complement automation

Once your automated layer is in place, supplement it with these manual checks on each major page type:

- Keyboard navigation: tab through the entire page. Confirm every interactive element is reachable, the focus order is logical, and there are no focus traps outside of modals.

- Screen reader test: navigate with VoiceOver (macOS/iOS) or NVDA (Windows) and confirm the announced content is meaningful at every step.

- Zoom to 200%: confirm the layout does not break or obscure content at double the default zoom level.

- Colour contrast with real context: check overlaid text on images and gradients, which automated tools often miss.

- Complete a key user journey without a mouse: sign up, add to cart, submit a form. This single check surfaces more issues than any automated scan.

Integrating into CI without blocking everything

The most common failure mode when teams try to add accessibility CI gates is over-scoping the initial check: they audit every route, the gate fails with 200 violations, and the team disables it within a week.

A more effective approach:

- Start with only your three most important pages and a critical-violations-only threshold. Let the gate pass for now.

- Fix violations found on those three pages. Then tighten the threshold to include lower-severity violations.

- Expand coverage to five, then ten pages as your baseline improves.

- After a quarter, your accessibility scan is part of normal CI and the violation rate is low enough that the gate has meaningful signal.
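The staged rollout above maps naturally onto axe-core's impact levels (`minor`, `moderate`, `serious`, `critical`). A minimal sketch, assuming you filter the `violations` array yourself before asserting; `blockingViolations`, `RolloutStage`, and the page paths in `STAGES` are hypothetical examples, not part of any library.

```typescript
// Sketch of a severity threshold for a staged accessibility gate.
// Impact names follow axe-core; everything else here is illustrative.

type Impact = "minor" | "moderate" | "serious" | "critical";

// Numeric rank so impact levels can be compared.
const SEVERITY_RANK: Record<Impact, number> = {
  minor: 0,
  moderate: 1,
  serious: 2,
  critical: 3,
};

// Only the fields this helper reads.
interface Violation {
  id: string;
  impact?: Impact | null;
}

/**
 * Return the violations that should fail the build at the current stage.
 * Violations with no impact value are treated as blocking.
 */
function blockingViolations(violations: Violation[], minImpact: Impact): Violation[] {
  return violations.filter(
    (v) => v.impact == null || SEVERITY_RANK[v.impact] >= SEVERITY_RANK[minImpact],
  );
}

// Hypothetical rollout stages: page paths and thresholds are examples only.
interface RolloutStage {
  pages: string[];
  minImpact: Impact;
}

const STAGES: RolloutStage[] = [
  { pages: ["/", "/sign-in", "/product"], minImpact: "critical" },
  { pages: ["/", "/sign-in", "/product"], minImpact: "serious" },
  { pages: ["/", "/sign-in", "/product", "/pricing", "/checkout"], minImpact: "moderate" },
];
```

Inside a Playwright test the assertion becomes `expect(blockingViolations(results.violations, STAGES[0].minImpact)).toEqual([]);`. Treating unclassified violations as blocking is a deliberate choice: the gate errs towards failing rather than passing silently.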

Accessibility is a practice, not a milestone. The goal is a continuous improvement loop: automated checks that catch regressions, manual reviews that find what automation misses, and design patterns that prevent violations from being introduced in the first place.